Learning About Self-Interest and Cooperation from AI Matrix Game Social Dilemmas

Putting an artificial intelligence agent in charge of complex facets of human life means giving it specific goals to work towards. What happens when multiple artificial intelligence agents within a larger system are managing the economy, traffic and other environmental challenges, each trying to meet its own specific goals?

If I have a goal to do one thing and you have a goal to do something else, and the two goals are not compatible but clash with each other, that creates a problem. We can compete, so that one of us comes out the winner and the other the loser, or we can cooperate for the benefit of both.

This has been studied in the prisoner’s dilemma, which shows how rational self-interest expressed through cooperation doesn’t always win out over a narrower self-interest that ignores cooperation.
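To make the dilemma concrete, here is a minimal Python sketch. The payoff numbers are illustrative assumptions (a commonly used textbook choice), not values from the paper, and `PAYOFF` and `best_response` are hypothetical names introduced only for this example.

```python
# Illustrative prisoner's dilemma payoffs (assumed, not from the paper).
# PAYOFF[(my_move, other_move)] is my reward; "C" = cooperate, "D" = defect.
PAYOFF = {
    ("C", "C"): 3,  # both cooperate
    ("C", "D"): 0,  # I cooperate, the other defects
    ("D", "C"): 5,  # I defect, the other cooperates
    ("D", "D"): 1,  # both defect
}


def best_response(other_move):
    """Return the move that maximizes my payoff against a fixed other move."""
    return max("CD", key=lambda my_move: PAYOFF[(my_move, other_move)])


if __name__ == "__main__":
    for other in "CD":
        print(f"Other plays {other}: my best reply is {best_response(other)}")
    print("Mutual cooperation pays", PAYOFF[("C", "C")], "each")
    print("Mutual defection pays", PAYOFF[("D", "D")], "each")
```

With these numbers, defecting is the best reply whatever the other player does, yet both players end up worse off than if they had both cooperated. That tension is what makes it a dilemma.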

In an effort to better understand the motives for self-interest, five research scientists from Google’s DeepMind authored a paper called Multi-agent Reinforcement Learning in Sequential Social Dilemmas. They wanted to better understand how agents interact and arrive at cooperative behavior, and also when they turn on each other. They took Matrix Game Social Dilemmas as a starting point and extended them into sequential games.

The researchers developed two games, Gathering and Wolfpack, both of which run on a two-dimensional grid game engine. The agents are trained with deep reinforcement learning, which tries to get artificial intelligence agents to maximize their cumulative long-term rewards through trial-and-error interactions.
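As a rough illustration of what "cumulative long-term reward" means, the sketch below adds up a sequence of rewards with a discount factor, so that later rewards count slightly less than earlier ones. The function name `discounted_return` and the value of `gamma` are assumptions for this example, not details taken from the paper.

```python
def discounted_return(rewards, gamma=0.99):
    """Sum rewards over time, weighting a reward t steps ahead by gamma**t.

    gamma is an assumed discount factor between 0 and 1.
    """
    total = 0.0
    # Work backwards so each step is: reward now + gamma * everything after.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


if __name__ == "__main__":
    # One apple collected on each of three consecutive steps.
    print(discounted_return([1, 1, 1], gamma=0.5))
```

An agent trained by reinforcement learning adjusts its behavior through trial and error so that this kind of total, rather than any single immediate reward, becomes as large as possible.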

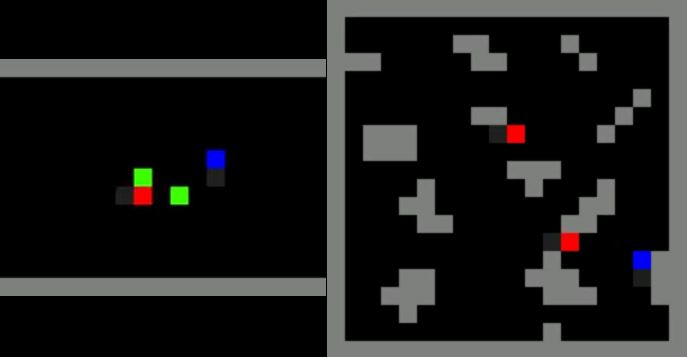

One game, Gathering, has two agents, red and blue, trying to collect green apples, with the option of tagging the other agent with a beam represented by a yellow line. The agents receive a reward of one for each apple. The beam fires along the agent’s current orientation. A player that is hit by the beam twice is removed from the game for a certain number of frames. Tagging itself delivers no reward to either player.

When there were enough apples to be divided between the two agents, they coexisted peacefully. When the supply of apples went down, the agents learned to defect and started using the tag feature to get more apples for themselves by trying to eliminate the other player from the competition.

By manipulating the rate at which apples respawn and the rate at which an eliminated player respawns, the researchers could control the cost of potential conflict that the agents weighed when choosing their behavior.
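The tagging rule and the respawn delay described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the researchers' game engine: the names `Player`, `tag`, `tick` and `timeout_frames` are hypothetical, and only the "two hits removes a player for some frames" rule comes from the description of the game.

```python
from dataclasses import dataclass

HITS_TO_REMOVE = 2  # two hits by the beam remove a player


@dataclass
class Player:
    name: str
    apples: int = 0
    hits_taken: int = 0
    frames_out: int = 0  # frames left before a removed player returns

    @property
    def active(self):
        return self.frames_out == 0


def tag(target, timeout_frames):
    """Register one beam hit; the second hit removes the target for a while."""
    if not target.active:
        return  # a removed player cannot be hit again
    target.hits_taken += 1
    if target.hits_taken >= HITS_TO_REMOVE:
        target.hits_taken = 0
        target.frames_out = timeout_frames  # assumed knob: player respawn delay


def tick(player):
    """Advance one frame for a player who is waiting to return."""
    if player.frames_out > 0:
        player.frames_out -= 1


if __name__ == "__main__":
    blue = Player("blue")
    tag(blue, timeout_frames=3)
    tag(blue, timeout_frames=3)
    print(blue.active)  # blue has been hit twice and is out of the game
    for _ in range(3):
        tick(blue)
    print(blue.active)  # blue is back after the timeout
```

A longer `timeout_frames` makes tagging more attractive, because the tagger gets the apples to itself for longer. A slower apple respawn makes the apples themselves more worth fighting over.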

The Gathering results suggest that conflict emerges from competition under scarcity, while conflict is less likely when resources are abundant.

Here is the first game, Gathering:

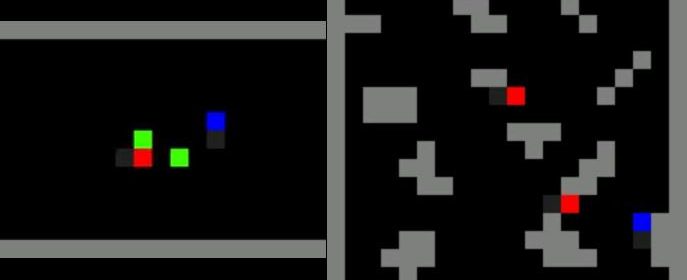

The second game is Wolfpack, with red wolves chasing a blue dot, the prey, while they avoid gray obstacles. If any wolf touches the prey, all the wolves in the capture radius get a reward. The reward the capturing player receives depends on how many other wolves are in the capture radius. Capturing the prey alone can be riskier, as you need to defend the carcass on your own, whereas working together can lead to a higher reward.

Rewards are then issued based on whether a wolf captured the prey alone or in cooperation with the team. The more often another agent was present at capture, the more likely the agents were to learn to cooperate and implement complex strategies.
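The capture rule can be sketched as a small reward function. This is a simplified illustration: the function name `capture_rewards`, the reward values `r_lone` and `r_team`, and the use of straight-line distance for the capture radius are all assumptions made for this example, not details confirmed by the paper.

```python
import math


def capture_rewards(wolves, prey, radius, r_lone=1.0, r_team=5.0):
    """Return {wolf_name: reward} for every wolf near the prey at capture time.

    wolves maps a name to an (x, y) position; prey is an (x, y) position.
    r_lone and r_team are assumed values for solo and group captures.
    """
    nearby = [
        name for name, position in wolves.items()
        if math.dist(position, prey) <= radius
    ]
    if not nearby:
        return {}
    # A lone capture pays less than one with other wolves in the radius.
    reward = r_lone if len(nearby) == 1 else r_team
    return {name: reward for name in nearby}


if __name__ == "__main__":
    prey = (0, 1)
    print(capture_rewards({"a": (0, 0), "b": (5, 5)}, prey, radius=2))
    print(capture_rewards({"a": (0, 0), "b": (1, 1)}, prey, radius=2))
```

The wider the gap between the team reward and the lone reward, the stronger the incentive for a wolf to stay close to its partner instead of hunting alone.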

Here is the second game, Wolfpack:

What’s interesting is that the two games represent two different types of situations, and two different tendencies emerged from them.

In the Gathering game, increasing the size of an agent’s neural network leads to an increase in the agent’s tendency to defect. In the Wolfpack game it was the opposite: greater network size leads to less defection and greater cooperation.

These types of studies will help artificial intelligence developers and social managers develop computer systems that can effectively interface with a complex multi-agent system. If one AI is tasked with managing traffic in the city, while another is tasked with reducing carbon emissions for the state or country, they need to interact in a cooperative manner rather than compete for their own goals or objectives in isolation.

References:

- AI researchers get a sense of how self-interest rules

- Multi-agent Reinforcement Learning in Sequential Social Dilemmas

- Can Steemit attention-economy be a “non-competitive” society?

Thank you for your time and attention! I appreciate the knowledge reaching more people. Take care. Peace.